# Run this cell to set up packages for lecture.

from lec05_imports import *

We'll continue working with the same data from last time.

states = bpd.read_csv('data/states.csv')

states = states.assign(Density=states.get('Population') / states.get('Land Area'))

states = states.set_index('State')

states

| Region | Capital City | Population | Land Area | Party | Density | |

|---|---|---|---|---|---|---|

| State | ||||||

| Alabama | South | Montgomery | 5024279 | 50645 | Republican | 99.21 |

| Alaska | West | Juneau | 733391 | 570641 | Republican | 1.29 |

| Arizona | West | Phoenix | 7151502 | 113594 | Republican | 62.96 |

| ... | ... | ... | ... | ... | ... | ... |

| West Virginia | South | Charleston | 1793716 | 24038 | Republican | 74.62 |

| Wisconsin | Midwest | Madison | 5893718 | 54158 | Republican | 108.82 |

| Wyoming | West | Cheyenne | 576851 | 97093 | Republican | 5.94 |

50 rows × 6 columns

Key concept: Querying.

We want to create a DataFrame consisting of only the states whose 'Region' is 'West'. How do we do that?

# This DataFrame only contains rows where the 'Region' is 'West'!

only_west = states[states.get('Region') == 'West']

only_west

| Region | Capital City | Population | Land Area | Party | Density | |

|---|---|---|---|---|---|---|

| State | ||||||

| Alaska | West | Juneau | 733391 | 570641 | Republican | 1.29 |

| Arizona | West | Phoenix | 7151502 | 113594 | Republican | 62.96 |

| California | West | Sacramento | 39538223 | 155779 | Democratic | 253.81 |

| ... | ... | ... | ... | ... | ... | ... |

| Utah | West | Salt Lake City | 3271616 | 82170 | Republican | 39.82 |

| Washington | West | Olympia | 7705281 | 66456 | Democratic | 115.95 |

| Wyoming | West | Cheyenne | 576851 | 97093 | Republican | 5.94 |

13 rows × 6 columns

🤯 What just happened?

True or False.bool is a data type in Python, just like int, float, and str. True or False.5 == 6

False

type(5 == 6)

bool

9 + 10 < 21

True

There are several types of comparisons we can make.

| symbol | meaning |

|---|---|

== |

equal to |

!= |

not equal to |

< |

less than |

<= |

less than or equal to |

> |

greater than |

>= |

greater than or equal to |

When comparing an entire Series to a single value, the result is a Series of bools (via broadcasting).

states

| Region | Capital City | Population | Land Area | Party | Density | |

|---|---|---|---|---|---|---|

| State | ||||||

| Alabama | South | Montgomery | 5024279 | 50645 | Republican | 99.21 |

| Alaska | West | Juneau | 733391 | 570641 | Republican | 1.29 |

| Arizona | West | Phoenix | 7151502 | 113594 | Republican | 62.96 |

| ... | ... | ... | ... | ... | ... | ... |

| West Virginia | South | Charleston | 1793716 | 24038 | Republican | 74.62 |

| Wisconsin | Midwest | Madison | 5893718 | 54158 | Republican | 108.82 |

| Wyoming | West | Cheyenne | 576851 | 97093 | Republican | 5.94 |

50 rows × 6 columns

states.get('Region') == 'West'

State

Alabama False

Alaska True

Arizona True

...

West Virginia False

Wisconsin False

Wyoming True

Name: Region, Length: 50, dtype: bool

To select only certain rows of states:

Trues (keep) and Falses (toss), usually by making a comparison.states[sequence_goes_here].states[states.get('Region') == 'West']

| Region | Capital City | Population | Land Area | Party | Density | |

|---|---|---|---|---|---|---|

| State | ||||||

| Alaska | West | Juneau | 733391 | 570641 | Republican | 1.29 |

| Arizona | West | Phoenix | 7151502 | 113594 | Republican | 62.96 |

| California | West | Sacramento | 39538223 | 155779 | Democratic | 253.81 |

| ... | ... | ... | ... | ... | ... | ... |

| Utah | West | Salt Lake City | 3271616 | 82170 | Republican | 39.82 |

| Washington | West | Olympia | 7705281 | 66456 | Democratic | 115.95 |

| Wyoming | West | Cheyenne | 576851 | 97093 | Republican | 5.94 |

13 rows × 6 columns

states[states.get('Region') == 'Pacific Northwest']

| Region | Capital City | Population | Land Area | Party | Density | |

|---|---|---|---|---|---|---|

| State |

Key concept: Shape of a DataFrame.

'Party' is 'Republican'.only_rep = states[states.get('Party') == 'Republican']

only_rep

| Region | Capital City | Population | Land Area | Party | Density | |

|---|---|---|---|---|---|---|

| State | ||||||

| Alabama | South | Montgomery | 5024279 | 50645 | Republican | 99.21 |

| Alaska | West | Juneau | 733391 | 570641 | Republican | 1.29 |

| Arizona | West | Phoenix | 7151502 | 113594 | Republican | 62.96 |

| ... | ... | ... | ... | ... | ... | ... |

| West Virginia | South | Charleston | 1793716 | 24038 | Republican | 74.62 |

| Wisconsin | Midwest | Madison | 5893718 | 54158 | Republican | 108.82 |

| Wyoming | West | Cheyenne | 576851 | 97093 | Republican | 5.94 |

31 rows × 6 columns

.shape returns the number of rows and columns in a given DataFrame..shape is not a method, so we don't use parentheses..shape is an attribute, as it describes the DataFrame.[]: .shape[0] for rows..shape[1] for columns.only_rep.shape

(31, 6)

# Number of rows.

only_rep.shape[0]

31

# Number of columns.

only_rep.shape[1]

6

# What proportion of US states are Republican?

only_rep.shape[0] / states.shape[0]

0.62

Key concepts: Working with the index. Combining multiple steps.

'Midwest'.'Land Area' in descending order.midwest = states[states.get('Region') == 'Midwest']

midwest

| Region | Capital City | Population | Land Area | Party | Density | |

|---|---|---|---|---|---|---|

| State | ||||||

| Illinois | Midwest | Springfield | 12812508 | 55519 | Democratic | 230.78 |

| Indiana | Midwest | Indianapolis | 6785528 | 35826 | Republican | 189.40 |

| Iowa | Midwest | Des Moines | 3190369 | 55857 | Republican | 57.12 |

| ... | ... | ... | ... | ... | ... | ... |

| Ohio | Midwest | Columbus | 11799448 | 40861 | Republican | 288.77 |

| South Dakota | Midwest | Pierre | 886667 | 75811 | Republican | 11.70 |

| Wisconsin | Midwest | Madison | 5893718 | 54158 | Republican | 108.82 |

12 rows × 6 columns

midwest_sorted = midwest.sort_values(by='Land Area', ascending=False)

midwest_sorted

| Region | Capital City | Population | Land Area | Party | Density | |

|---|---|---|---|---|---|---|

| State | ||||||

| Kansas | Midwest | Topeka | 2937880 | 81759 | Republican | 35.93 |

| Minnesota | Midwest | Saint Paul | 5706494 | 79627 | Democratic | 71.67 |

| Nebraska | Midwest | Lincoln | 1961504 | 76824 | Republican | 25.53 |

| ... | ... | ... | ... | ... | ... | ... |

| Wisconsin | Midwest | Madison | 5893718 | 54158 | Republican | 108.82 |

| Ohio | Midwest | Columbus | 11799448 | 40861 | Republican | 288.77 |

| Indiana | Midwest | Indianapolis | 6785528 | 35826 | Republican | 189.40 |

12 rows × 6 columns

midwest_sorted.get('State').iloc[0]

--------------------------------------------------------------------------- KeyError Traceback (most recent call last) Cell In[18], line 1 ----> 1 midwest_sorted.get('State').iloc[0] File ~\Anaconda3\lib\site-packages\babypandas\utils.py:20, in suppress_warnings.<locals>.wrapper(*args, **kwargs) 18 with warnings.catch_warnings(): 19 warnings.simplefilter("ignore") ---> 20 return func(*args, **kwargs) File ~\Anaconda3\lib\site-packages\babypandas\bpd.py:327, in DataFrame.get(self, key) 325 if any(mask): 326 k = [key] if isinstance(key, str) else key --> 327 raise KeyError("{} not found in columns".format(np.array(k)[mask])) 329 f = _lift_to_pd(self._pd.get) 330 return f(key=key) KeyError: "['State'] not found in columns"

.get because .get is only for columns, and there is no column called 'State'. 'State' is the index of the DataFrame. .index..shape, this is an attribute of the DataFrame, not a method. Don't use parentheses. [].midwest_sorted.index

Index(['Kansas', 'Minnesota', 'Nebraska', 'South Dakota', 'North Dakota',

'Missouri', 'Michigan', 'Iowa', 'Illinois', 'Wisconsin', 'Ohio',

'Indiana'],

dtype='object', name='State')

midwest_sorted.index[0]

'Kansas'

It is not necessary to define the intermediate variables midwest and midwest_sorted. We can do everything in one line of code.

When solving a multi-step problem, develop your solution incrementally. Write one piece of code at a time and run it.

# Final solution, which you should build up one step at a time.

states[states.get('Region') == 'Midwest'].sort_values(by='Land Area', ascending=False).index[0]

'Kansas'

# You can space your code out like this if needed.

(

states[states.get('Region') == 'Midwest']

.sort_values(by='Land Area', ascending=False)

.index[0]

)

'Kansas'

Which expression below evaluates to the total population of the 'West'?

A. states[states.get('Region') == 'West'].get('Population').sum()

B. states.get('Population').sum()[states.get('Region') == 'West']

C. states['West'].get('Population').sum()

D. More than one of the above.

...

Ellipsis

Key concepts: Queries with multiple conditions. Selecting rows by position.

& for "and" and | for "or".&: All conditions must be true.|: At least one condition must be true.(parentheses) around each condition!and and or here! They do not behave as you'd want.states[(states.get('Party') == 'Republican') & (states.get('Region') == 'South')]

| Region | Capital City | Population | Land Area | Party | Density | |

|---|---|---|---|---|---|---|

| State | ||||||

| Alabama | South | Montgomery | 5024279 | 50645 | Republican | 99.21 |

| Arkansas | South | Little Rock | 3011524 | 52035 | Republican | 57.87 |

| Florida | South | Tallahassee | 21538187 | 53625 | Republican | 401.64 |

| ... | ... | ... | ... | ... | ... | ... |

| Tennessee | South | Nashville | 6910840 | 41235 | Republican | 167.60 |

| Texas | South | Austin | 29145505 | 261232 | Republican | 111.57 |

| West Virginia | South | Charleston | 1793716 | 24038 | Republican | 74.62 |

13 rows × 6 columns

# You can also add line breaks within brackets.

states[(states.get('Party') == 'Republican') &

(states.get('Region') == 'South')]

| Region | Capital City | Population | Land Area | Party | Density | |

|---|---|---|---|---|---|---|

| State | ||||||

| Alabama | South | Montgomery | 5024279 | 50645 | Republican | 99.21 |

| Arkansas | South | Little Rock | 3011524 | 52035 | Republican | 57.87 |

| Florida | South | Tallahassee | 21538187 | 53625 | Republican | 401.64 |

| ... | ... | ... | ... | ... | ... | ... |

| Tennessee | South | Nashville | 6910840 | 41235 | Republican | 167.60 |

| Texas | South | Austin | 29145505 | 261232 | Republican | 111.57 |

| West Virginia | South | Charleston | 1793716 | 24038 | Republican | 74.62 |

13 rows × 6 columns

& and | operators work element-wise!¶(states.get('Party') == 'Republican')

State

Alabama True

Alaska True

Arizona True

...

West Virginia True

Wisconsin True

Wyoming True

Name: Party, Length: 50, dtype: bool

(states.get('Region') == 'South')

State

Alabama True

Alaska False

Arizona False

...

West Virginia True

Wisconsin False

Wyoming False

Name: Region, Length: 50, dtype: bool

(states.get('Party') == 'Republican') & (states.get('Region') == 'South')

State

Alabama True

Alaska False

Arizona False

...

West Virginia True

Wisconsin False

Wyoming False

Length: 50, dtype: bool

(

states[(states.get('Party') == 'Republican') &

(states.get('Region') == 'South')]

.sort_values(by='Population', ascending=False)

)

| Region | Capital City | Population | Land Area | Party | Density | |

|---|---|---|---|---|---|---|

| State | ||||||

| Texas | South | Austin | 29145505 | 261232 | Republican | 111.57 |

| Florida | South | Tallahassee | 21538187 | 53625 | Republican | 401.64 |

| Georgia | South | Atlanta | 10711908 | 57513 | Republican | 186.25 |

| ... | ... | ... | ... | ... | ... | ... |

| Arkansas | South | Little Rock | 3011524 | 52035 | Republican | 57.87 |

| Mississippi | South | Jackson | 2961279 | 46923 | Republican | 63.11 |

| West Virginia | South | Charleston | 1793716 | 24038 | Republican | 74.62 |

13 rows × 6 columns

How do we extract the first three rows of this DataFrame?

.take to select rows by position¶.take(sequence_of_integer_positions). This keeps only the rows whose positions are in the specified sequence (list/array)..iloc[] on a Series.(

states[(states.get('Party') == 'Republican') &

(states.get('Region')=='South')]

.sort_values(by='Population', ascending=False)

.take([0, 1, 2])

)

| Region | Capital City | Population | Land Area | Party | Density | |

|---|---|---|---|---|---|---|

| State | ||||||

| Texas | South | Austin | 29145505 | 261232 | Republican | 111.57 |

| Florida | South | Tallahassee | 21538187 | 53625 | Republican | 401.64 |

| Georgia | South | Atlanta | 10711908 | 57513 | Republican | 186.25 |

.take(np.arange(3)) could equivalently be used in place of .take([0, 1, 2]).Write code to answer each question below.

'West' with the largest land area?'Northeast' have more land area than an average US state?'Midwest', 'South', and 'Northeast?

states[states.get('Region') == 'West'].sort_values(by='Land Area', ascending=False).get('Capital City').iloc[0]

2. How many states in the Northeast have more land area than an average US state?

states[(states.get('Region') == 'Northeast') &

(states.get('Land Area') > states.get('Land Area').mean())].shape[0]

3. What is the total population of the Midwest, South, and Northeast?

states[(states.get('Region') == 'Midwest') |

(states.get('Region') == 'South') |

(states.get('Region') == 'Northeast')].get('Population').sum()

Alternate solution to 3:

states.get('Population').sum() - states[states.get('Region') == 'West'].get('Population').sum()

...

Ellipsis

Key concept: Grouping by one column.

We can find the total population of any one region using the tools we already have.

states[states.get('Region') == 'West'].get('Population').sum()

78588572

states[states.get('Region') == 'Midwest'].get('Population').sum()

68985454

But can we find the total population of every region all at the same time, without writing very similar code multiple times? Yes, there is a better way!

.groupby¶Observe what happens when we use the .groupby method on states with the argument 'Region'.

states.groupby('Region').sum()

| Population | Land Area | Density | |

|---|---|---|---|

| Region | |||

| Midwest | 68985454 | 750524 | 1298.78 |

| Northeast | 57609148 | 161912 | 4957.49 |

| South | 125576562 | 868356 | 3189.37 |

| West | 78588572 | 1751054 | 881.62 |

These populations (for the 'West' and 'Midwest') match the ones we found on the previous slide, except now we get the populations for all regions at the same time. What just happened? 🤯

Consider the DataFrame pets, shown below.

| Species | Color | Weight | Age | |

|---|---|---|---|---|

| 0 | dog | black | 40 | 5.0 |

| 1 | cat | golden | 15 | 8.0 |

| 2 | cat | black | 20 | 9.0 |

| 3 | dog | white | 80 | 2.0 |

| 4 | dog | golden | 25 | 0.5 |

| 5 | hamster | golden | 1 | 3.0 |

Let's see what happens under the hood when we run pets.groupby('Species').mean().

show_grouping_animation()

pets = bpd.DataFrame().assign(

Species=['dog', 'cat', 'cat', 'dog', 'dog', 'hamster'],

Color=['black', 'golden', 'black', 'white', 'golden', 'golden'],

Weight=[40, 15, 20, 80, 25, 1],

Age=[5, 8, 9, 2, 0.5, 3]

)

pets

| Species | Color | Weight | Age | |

|---|---|---|---|---|

| 0 | dog | black | 40 | 5.0 |

| 1 | cat | golden | 15 | 8.0 |

| 2 | cat | black | 20 | 9.0 |

| 3 | dog | white | 80 | 2.0 |

| 4 | dog | golden | 25 | 0.5 |

| 5 | hamster | golden | 1 | 3.0 |

pets.groupby('Species').mean()

| Weight | Age | |

|---|---|---|

| Species | ||

| cat | 17.50 | 8.5 |

| dog | 48.33 | 2.5 |

| hamster | 1.00 | 3.0 |

It takes several steps to go from the original pets DataFrame to this grouped DataFrame, but we don't get to see any of Python's inner workings, just the final output.

states

| Region | Capital City | Population | Land Area | Party | Density | |

|---|---|---|---|---|---|---|

| State | ||||||

| Alabama | South | Montgomery | 5024279 | 50645 | Republican | 99.21 |

| Alaska | West | Juneau | 733391 | 570641 | Republican | 1.29 |

| Arizona | West | Phoenix | 7151502 | 113594 | Republican | 62.96 |

| ... | ... | ... | ... | ... | ... | ... |

| West Virginia | South | Charleston | 1793716 | 24038 | Republican | 74.62 |

| Wisconsin | Midwest | Madison | 5893718 | 54158 | Republican | 108.82 |

| Wyoming | West | Cheyenne | 576851 | 97093 | Republican | 5.94 |

50 rows × 6 columns

states.groupby('Region').sum()

| Population | Land Area | Density | |

|---|---|---|---|

| Region | |||

| Midwest | 68985454 | 750524 | 1298.78 |

| Northeast | 57609148 | 161912 | 4957.49 |

| South | 125576562 | 868356 | 3189.37 |

| West | 78588572 | 1751054 | 881.62 |

# Note the use of .index – remember, the index isn't a column!

(

states

.groupby('Region')

.sum()

.sort_values(by='Population', ascending=False)

.index[0]

)

'South'

.groupby in general¶In short, .groupby aggregates (collects) all rows with the same value in a specified column (e.g. 'Region') into a single row in the resulting DataFrame, using an aggregation method (e.g. .sum()) to combine values from different rows with the same value in the specified column.

To use .groupby:

.groupby(column_name) will gather rows which have the same value in the specified column (column_name)..count(), .sum(), .mean(), .median(), .max(), and .min().states

| Region | Capital City | Population | Land Area | Party | Density | |

|---|---|---|---|---|---|---|

| State | ||||||

| Alabama | South | Montgomery | 5024279 | 50645 | Republican | 99.21 |

| Alaska | West | Juneau | 733391 | 570641 | Republican | 1.29 |

| Arizona | West | Phoenix | 7151502 | 113594 | Republican | 62.96 |

| ... | ... | ... | ... | ... | ... | ... |

| West Virginia | South | Charleston | 1793716 | 24038 | Republican | 74.62 |

| Wisconsin | Midwest | Madison | 5893718 | 54158 | Republican | 108.82 |

| Wyoming | West | Cheyenne | 576851 | 97093 | Republican | 5.94 |

50 rows × 6 columns

states.groupby('Region').sum()

| Population | Land Area | Density | |

|---|---|---|---|

| Region | |||

| Midwest | 68985454 | 750524 | 1298.78 |

| Northeast | 57609148 | 161912 | 4957.49 |

| South | 125576562 | 868356 | 3189.37 |

| West | 78588572 | 1751054 | 881.62 |

Tip: look for keywords "per," "for each," and "indexed by" when solving problems.

states.groupby('Region').max()

| Capital City | Population | Land Area | Party | Density | |

|---|---|---|---|---|---|

| Region | |||||

| Midwest | Topeka | 12812508 | 81759 | Republican | 288.77 |

| Northeast | Trenton | 20201249 | 47126 | Republican | 1263.12 |

| South | Tallahassee | 29145505 | 261232 | Republican | 636.37 |

| West | Santa Fe | 39538223 | 570641 | Republican | 253.81 |

12812508 / 81759 == 288.77

False

.count() aggregation method.states.groupby('Region').count()

| Capital City | Population | Land Area | Party | Density | |

|---|---|---|---|---|---|

| Region | |||||

| Midwest | 12 | 12 | 12 | 12 | 12 |

| Northeast | 9 | 9 | 9 | 9 | 9 |

| South | 16 | 16 | 16 | 16 | 16 |

| West | 13 | 13 | 13 | 13 | 13 |

Consider dropping unneeded columns and renaming columns as follows:

.assign to create a new column containing the same values as the old column(s)..drop(columns=list_of_column_labels) to drop the old column(s). .get(list_of_column_labels) to keep only the columns in the given list. The columns will appear in the order you specify, so this is also useful for reordering columns!states_by_region = states.groupby('Region').count()

states_by_region = states_by_region.assign(

States=states_by_region.get('Capital City')

).get(['States'])

states_by_region

| States | |

|---|---|

| Region | |

| Midwest | 12 |

| Northeast | 9 |

| South | 16 |

| West | 13 |

from IPython.display import YouTubeVideo

YouTubeVideo('xg7rnjWnZ48')

Before watching the video or looking at the solutions, make sure to try these problems on your own – they're great prep for homeworks, projects, and exams! Feel free to ask about them in office hours or on Ed.

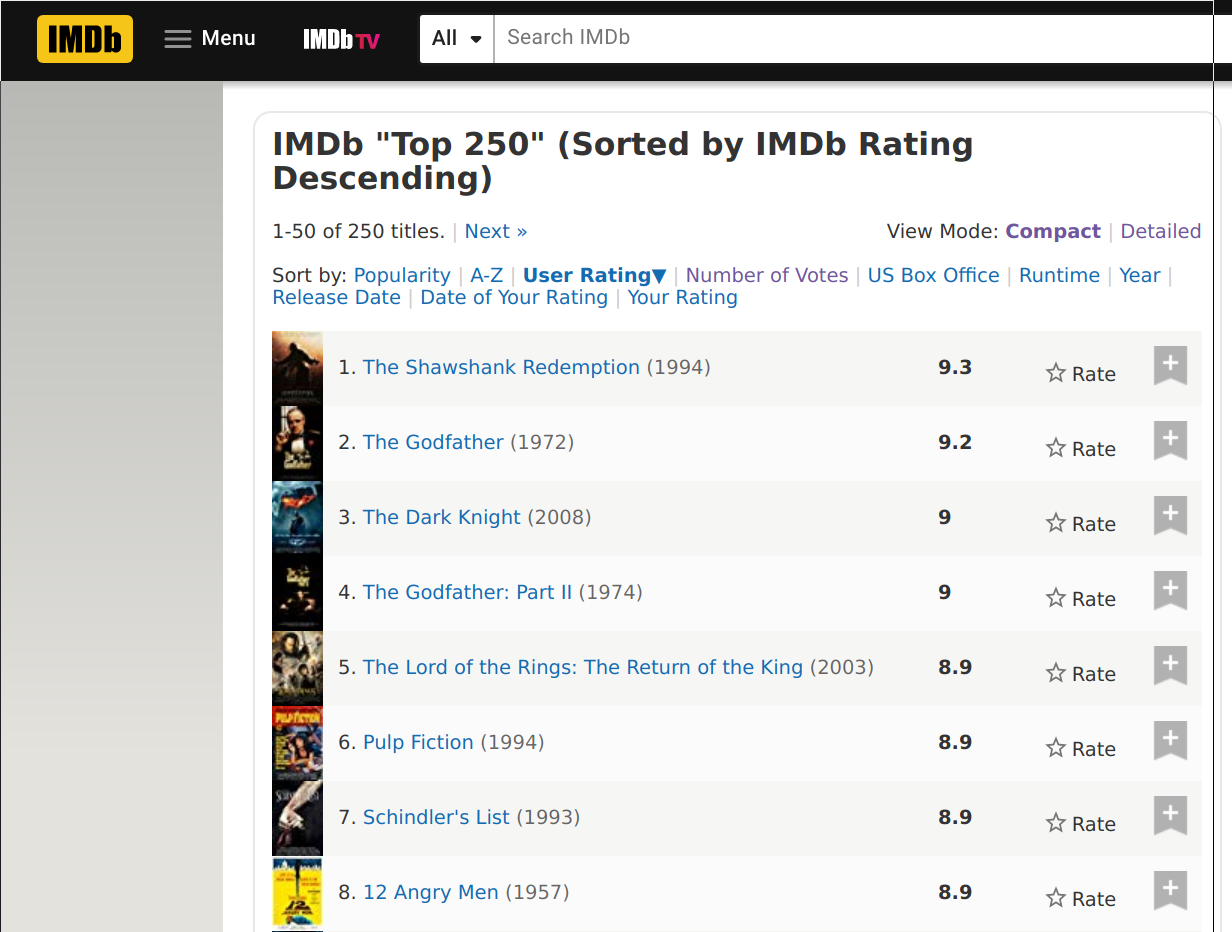

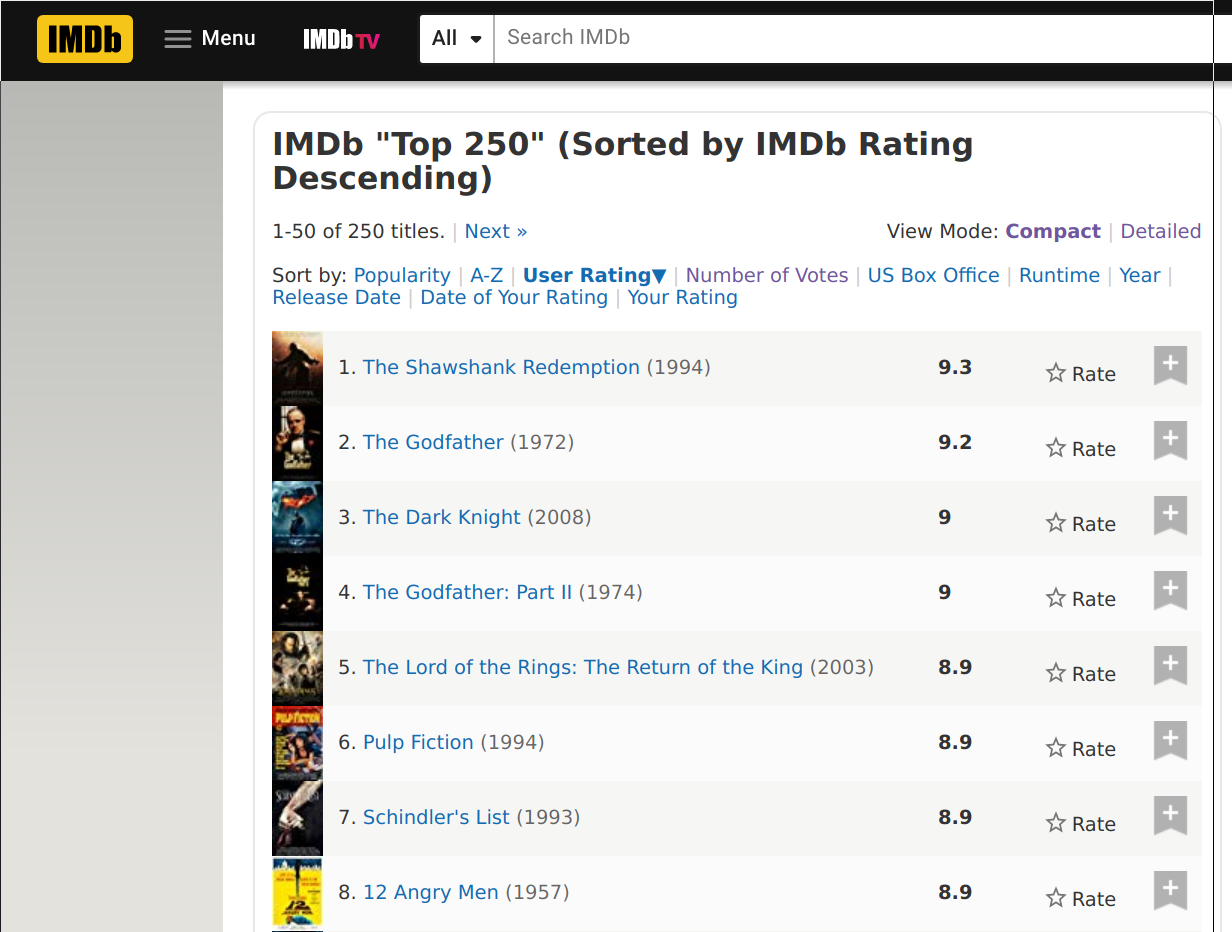

imdb = bpd.read_csv('data/imdb.csv').set_index('Title').sort_values(by='Rating')

imdb

| Votes | Rating | Year | Decade | |

|---|---|---|---|---|

| Title | ||||

| Akira | 91652 | 8.0 | 1988 | 1980 |

| Per un pugno di dollari | 124671 | 8.0 | 1964 | 1960 |

| Guardians of the Galaxy | 527349 | 8.0 | 2014 | 2010 |

| ... | ... | ... | ... | ... |

| The Godfather: Part II | 692753 | 9.0 | 1974 | 1970 |

| The Shawshank Redemption | 1498733 | 9.2 | 1994 | 1990 |

| The Godfather | 1027398 | 9.2 | 1972 | 1970 |

250 rows × 4 columns

imdb.groupby('Decade').count()

| Votes | Rating | Year | |

|---|---|---|---|

| Decade | |||

| 1920 | 4 | 4 | 4 |

| 1930 | 7 | 7 | 7 |

| 1940 | 14 | 14 | 14 |

| ... | ... | ... | ... |

| 1990 | 42 | 42 | 42 |

| 2000 | 50 | 50 | 50 |

| 2010 | 29 | 29 | 29 |

10 rows × 3 columns

# We'll learn how to make plots like this in the next lecture!

imdb.groupby('Decade').count().plot(y='Year');

Let's try to do this two different ways.

imdb[imdb.get('Decade') == 1990].sort_values('Rating', ascending=False).index[0]

'The Shawshank Redemption'

Note: The command to extract the index of a DataFrame is .index - no parentheses! This is different than the way we extract columns, with .get(), because the index is not a column.

imdb.reset_index().groupby('Decade').max()

| Title | Votes | Rating | Year | |

|---|---|---|---|---|

| Decade | ||||

| 1920 | The Kid | 98794 | 8.3 | 1927 |

| 1930 | The Wizard of Oz | 259235 | 8.5 | 1939 |

| 1940 | The Treasure of the Sierra Madre | 350551 | 8.6 | 1949 |

| ... | ... | ... | ... | ... |

| 1990 | Unforgiven | 1498733 | 9.2 | 1999 |

| 2000 | Yip Man | 1473049 | 8.9 | 2009 |

| 2010 | X-Men: Days of Future Past | 1271949 | 8.7 | 2015 |

10 rows × 4 columns

.max()), aggregation is done to each column individually. max is not helpful here.

good_movies_per_year = imdb[imdb.get('Rating') > 8.5].groupby('Year').count()

good_movies_per_year[good_movies_per_year.get('Votes') > 3].shape[0]

As mentioned below, you can also use:

(good_movies_per_year.get('Votes') > 3).sum()

.sum() on a boolean array¶True elements because Python treats True as 1 and False as 0.

# A Series of Trues and Falses; True when there were at least 3 movies on the list from that year

more_than_3_ix = imdb.groupby('Year').count().get('Votes') > 3

# The year with the highest average rating, among the years with more than 3 movies

imdb.groupby('Year').mean()[more_than_3_ix].sort_values(by='Rating').index[-1]

Hint: Use .str.len() on the column or index that contains the names of the movies.

(

imdb.assign(title_length=imdb.index.str.len())

.groupby('Year').mean()

.sort_values(by='title_length')

.index[-1]

)

The year is 1964 – take a look at the movies from 1964 by querying!

# A Series of Trues and Falses; True when there were at least 3 movies on the list from that year

more_than_3_ix = imdb.groupby('Year').count().get('Votes') > 3

# The sum of the ratings of movies from years that had at least 3 movies on the list

total_rating = imdb.groupby('Year').sum()[more_than_3_ix].get('Rating').sum()

# The total number of movies from years that had at least 3 movies on the list

count = imdb.groupby('Year').count()[more_than_3_ix].get('Rating').sum()

# The correct answer

average_rating = total_rating / count

# Close, but incorrect:

# Doesn't account for the fact that different years have different numbers of movies on the list

close_but_wrong = imdb.groupby('Year').mean()[more_than_3_ix].get('Rating').mean()

& if you require all to be true, or | if you require at least one to be true.df.groupby(column_name).agg_method() aggregates all rows with the same value for column_name into a single row in the resulting DataFrame, using agg_method() to combine values..count(), .sum(), .mean(), .median(), .max(), and .min().A picture is worth a 1000 words – it's time to visualize!